Overlap in computer modeling holds key to next-generation processing, Virginia Tech researchers find

Exascale computing — the ability to perform calculations at 1 billion billion per second — is what researchers are striving to push processors to do in the next decade. That’s 1,000 times faster than the first petascale computer that came into existence in 2008.

Achieving efficiency will be paramount to building high-performance parallel computing systems if applications are to run in environments of enormous scale and also limited power.

A team of researchers in the Department of Computer Science in Virginia Tech’s College of Engineering discovered a key to what could keep supercomputing on the road to the ever-faster processing times needed to achieve exascale computing, a capability policymakers say is necessary to keep the United States competitive in industries from cybersecurity to ecommerce.

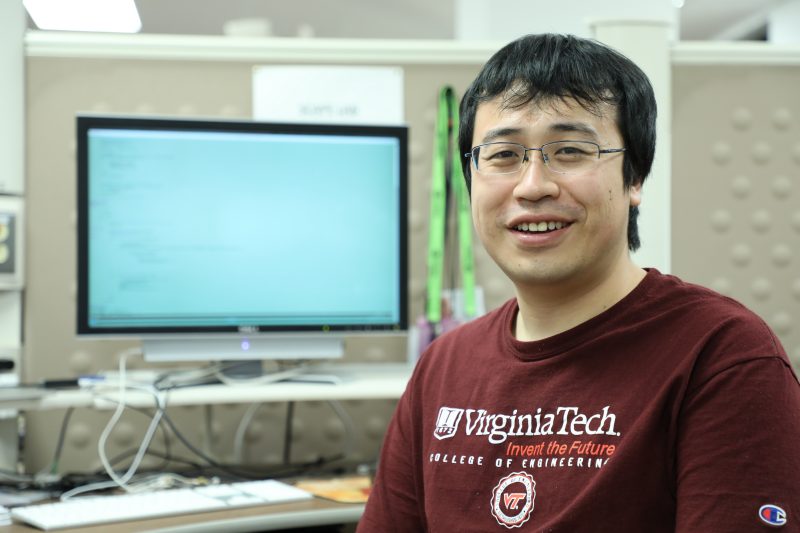

“Parallel computing is everywhere when you think about it,” said Bo Li, a computer science Ph.D. candidate and first author on the paper being presented about the team's research this month. “From making Hollywood movies to managing cybersecurity threats to contributing to milestones in life science research, making strides in processing times is a priority to get to the next generation of supercomputing.”

Li will present the team’s research on June 29 at the Association for Computing Machinery’s 26th International Symposium on High Performance Parallel and Distributed Computing in Washington, D.C. The research was funded by the National Science Foundation.

The team used a model called Compute-Overlap-Stall (COS) to better isolate contributions to the total time to completion for important parallel applications. Using the COS model, they found that a nebulous measurement called overlap, the time during which computation and memory access proceed simultaneously, played a key role in understanding the performance of parallel systems. Previous models lumped overlap time into either compute time or memory stall time, but the Virginia Tech team found that when system and application variables changed, the effects of overlap time were unique and could dominate performance. This led to the realization that the dominance and complexity of overlap meant it had to be modeled independently on current and future systems or efficiency would remain elusive.
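To see why lumping overlap into one bucket matters, consider a minimal sketch. It is an illustration only, not the team's implementation: the `CosBreakdown` class, both prediction functions, the timing numbers, and the assumption that compute time shrinks in proportion to CPU speed while stall time does not are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CosBreakdown:
    """Hypothetical three-way split of a program's run time, in seconds."""
    compute: float  # time spent only computing
    overlap: float  # time where computation and memory access proceed together
    stall: float    # time spent only waiting on memory

    @property
    def total(self) -> float:
        return self.compute + self.overlap + self.stall


def predict_overlap_as_compute(t: CosBreakdown, cpu_speedup: float) -> float:
    """Older-style model A: overlap is lumped into compute and scales with CPU speed."""
    return (t.compute + t.overlap) / cpu_speedup + t.stall


def predict_overlap_as_stall(t: CosBreakdown, cpu_speedup: float) -> float:
    """Older-style model B: overlap is lumped into stall and ignores CPU speed."""
    return t.compute / cpu_speedup + t.overlap + t.stall


# Made-up baseline: 4 s compute, 3 s overlap, 3 s stall.
baseline = CosBreakdown(compute=4.0, overlap=3.0, stall=3.0)

# Predict run time after doubling CPU speed under each lumping choice.
low = predict_overlap_as_compute(baseline, cpu_speedup=2.0)
high = predict_overlap_as_stall(baseline, cpu_speedup=2.0)

print(baseline.total, low, high)  # 10.0 6.5 8.0
```

With these invented numbers the two lumped models disagree by 1.5 seconds, and the disagreement grows with the size of the overlap term. That gap is the uncertainty that modeling overlap on its own is meant to resolve.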

“What we learned is that overlap time is not an insignificant player in computer run times and the time it takes to perform tasks,” said Kirk Cameron, professor of computer science and lead on the project. “Researchers have spent three decades increasing overlap and we have shown that in order to improve the efficiency of future designs, we must consider their precise impact on overlap in isolation.”

The Virginia Tech researchers applied COS modeling to both Intel and IBM architectures and found that the model's error rate was as low as 7 percent on Intel systems and as high as 17 percent on IBM architecture. The team validated their models using 19 different applications as benchmarks, drawn from the following codes: LULESH, AMGmk, Rodinia, and pF3D.

“This study is important to all kinds of industries who care about efficiency,” said Li. “Any entity that relies on supercomputing including cybersecurity organizations, large online retailers such as Amazon and video distribution services like Netflix, would be affected by the changes in processing time we found in measuring overlap.”

One of the challenges in the study was “throttling” three elements: central processing unit speed, memory speed, and concurrency, or running several threads at once. Throttling refers to a sequence that causes the computer to be idle for several cycles. This is the first paper to evaluate the simultaneous combined effects of all three methods.

Parallel computing in the exascale realm has the potential to open so many new frontiers across scientific research that its promise is almost boundless. Understanding overlap and how to make computers run most efficiently will be a significant key to achieving the computing power required to run massive numbers of calculations in the not-too-distant future.

Written by Amy Loeffler
