Researchers from music and engineering team up to turn big data into sound

A unique collaboration between a music professor and an engineering professor at Virginia Tech will create a new data analysis platform that makes it possible to understand the significance of data by turning it into sound.

The work combines elements of music, geospatial science, computer science, and human-computer interaction. It marks the first time the National Science Foundation has funded a research project led by a faculty member from the university’s School of Performing Arts in collaboration with the College of Engineering.

Ivica Ico Bukvic, associate professor of composition and multimedia in the College of Liberal Arts and Human Sciences, and Greg Earle, professor of electrical and computer engineering, will leverage unique infrastructure provided by the Institute for Creativity, Arts, and Technology to investigate how immersive sound can be used to develop a better understanding of complex systems.

This is a pioneering approach to studying spatially distributed data. Instead of placing information into a visual context to show patterns or correlations, as data visualization does, the work will use an aural environment that leverages the natural affordances of the space and the user’s location within the sound field.

Data sonification, which involves converting non-auditory information into sound, is a relatively unexplored area of research, yet provides a unique perspective for exploring data. The human auditory system has a superior ability to recognize temporal changes and patterns, making sonification a powerful tool for studying complex systems.

“Identifying new time and space correlations between variables often leads to breakthroughs in the physical sciences,” explained Bukvic, who also serves as a senior fellow for the Institute for Creativity, Arts, and Technology. “It makes sense that we would want to go beyond two-dimensional graphical models of information and make new discoveries using senses other than our eyes.”

Titled “Spatial Audio Data Immersive Experience (SADIE),” the project is the first large-scale endeavor focusing on immersive spatially-aware sonified data using a high-density loudspeaker array. The research will focus on the earth’s upper atmospheric system, which features physical variables that are spatially and temporally rich. Each of the data sets associated with this system will be represented by distinct sound properties, such as amplitude, pitch, and volume.
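To illustrate the general idea of mapping data to sound properties, the following is a minimal Python sketch, not the SADIE implementation. It assumes two made-up data series, linearly rescales one to pitch and the other to amplitude, and renders each data point as a short sine tone. The function names (`scale`, `sonify`), the 220–880 Hz pitch range, the sample rate, and the sample values are all illustrative assumptions rather than details from the project.

```python
import math


def scale(value, lo, hi, out_lo, out_hi):
    """Linearly map value from the range [lo, hi] to [out_lo, out_hi]."""
    if hi == lo:
        # Flat data: fall back to the middle of the output range.
        return (out_lo + out_hi) / 2
    return out_lo + (value - lo) / (hi - lo) * (out_hi - out_lo)


def sonify(pitch_data, amp_data, sample_rate=8000, note_seconds=0.25):
    """Return audio samples in [-1, 1], one short sine tone per data point.

    pitch_data drives the tone frequency; amp_data drives its loudness.
    """
    p_lo, p_hi = min(pitch_data), max(pitch_data)
    a_lo, a_hi = min(amp_data), max(amp_data)
    samples_per_note = int(sample_rate * note_seconds)
    samples = []
    for p, a in zip(pitch_data, amp_data):
        freq = scale(p, p_lo, p_hi, 220.0, 880.0)  # assumed pitch range in Hz
        amp = scale(a, a_lo, a_hi, 0.1, 1.0)       # assumed loudness range
        for i in range(samples_per_note):
            samples.append(amp * math.sin(2 * math.pi * freq * i / sample_rate))
    return samples


# Hypothetical readings, invented for illustration only.
series_a = [1.0, 2.5, 4.0, 3.0]      # mapped to pitch
series_b = [900, 1100, 1000, 1200]   # mapped to amplitude
audio = sonify(series_a, series_b)
```

The resulting `audio` list could be written to a WAV file or sent to an audio library for playback. A spatial system like the one described here would additionally assign each sound a position in the loudspeaker array, which this sketch does not attempt.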

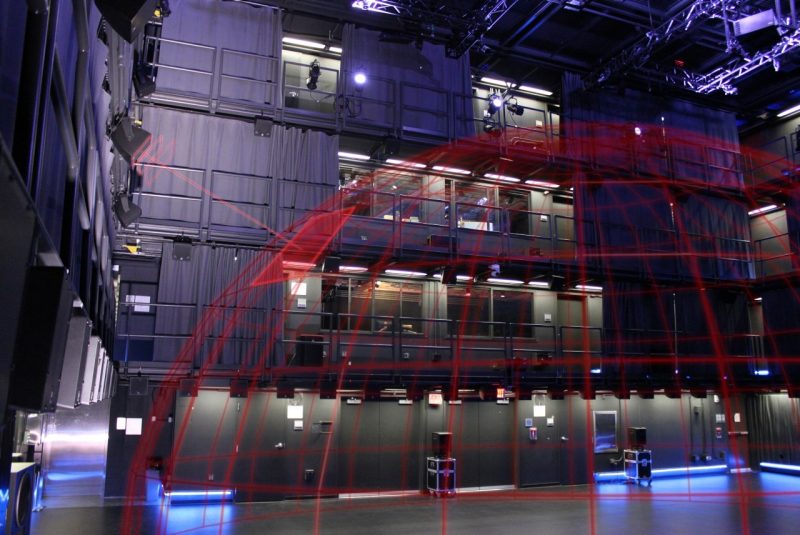

These sounds will be played through a 129-loudspeaker spatially distributed immersive sound system in the Cube, located in the Moss Arts Center. A combination of performance space, research laboratory, and studio, the Cube is a collaborative research facility at Virginia Tech where researchers, composers, and musicians are uncovering new possibilities in immersive sound.

Using the Cube’s motion capture system, participants will be able to navigate the sonified data through a gesture-driven interface similar to the one depicted in the science fiction film “Minority Report.” The interface will allow them to rewind, fast-forward, rotate, zoom, amplify, speed up, and slow down the data playback. The system will also be used to capture critical user study data.

Allowing the brain’s innate signal processing mechanisms to identify specific features in complex data sets is a logical way to link computational sciences with human sensory perceptions. This merging of technology and nature could further current analysis techniques and foster new breakthroughs involving complex systems in science, with the potential to produce new technologies designed to spur creativity.

The project exemplifies the kind of work already happening within the School of Performing Arts’ Creative Technologies in Music program at Virginia Tech. This new option in the music degree was created for students interested in exploring interdisciplinary research that integrates science, engineering, art, and design.

If this novel approach to experiencing data can improve people’s understanding of complex relationships in physical systems, it could be applied to other fields of study, such as thermodynamics, quantum mechanics, and aeronautical engineering; help advance visualizations and virtual reality systems; and create interdisciplinary bridges between scientific communities, including music, computing, and the physical sciences.
