Biocomplexity Institute creates computing center to solve science's most complex problems

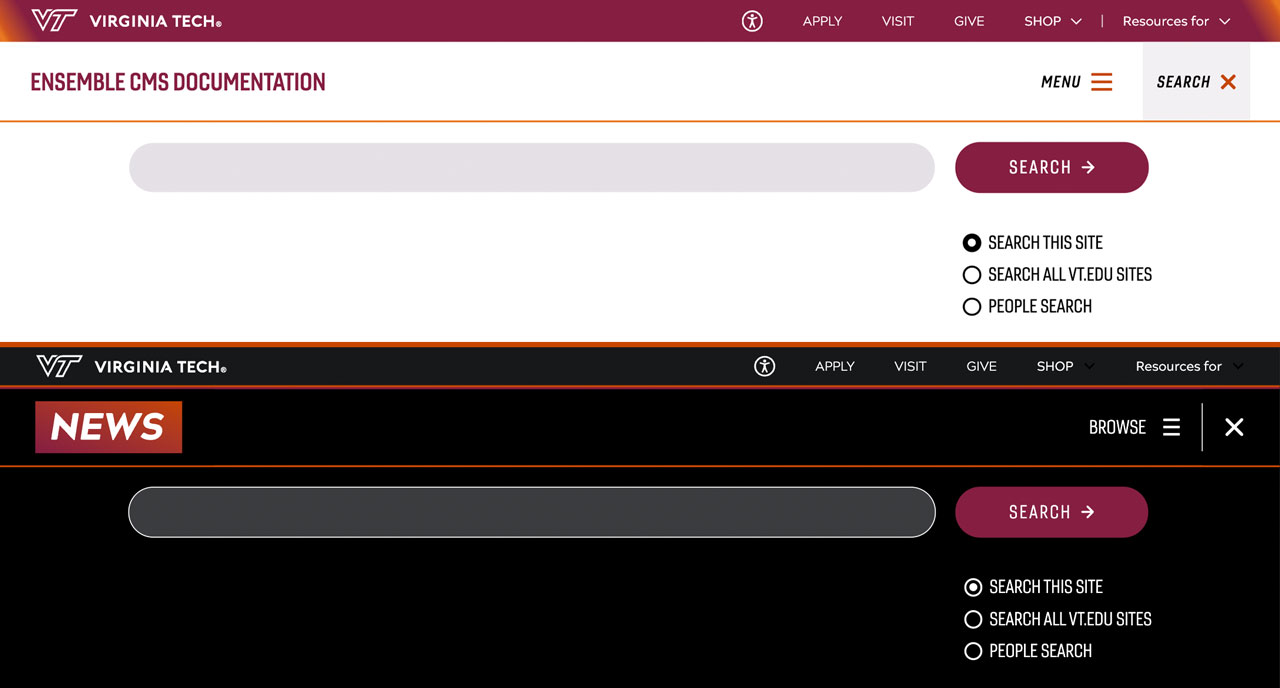

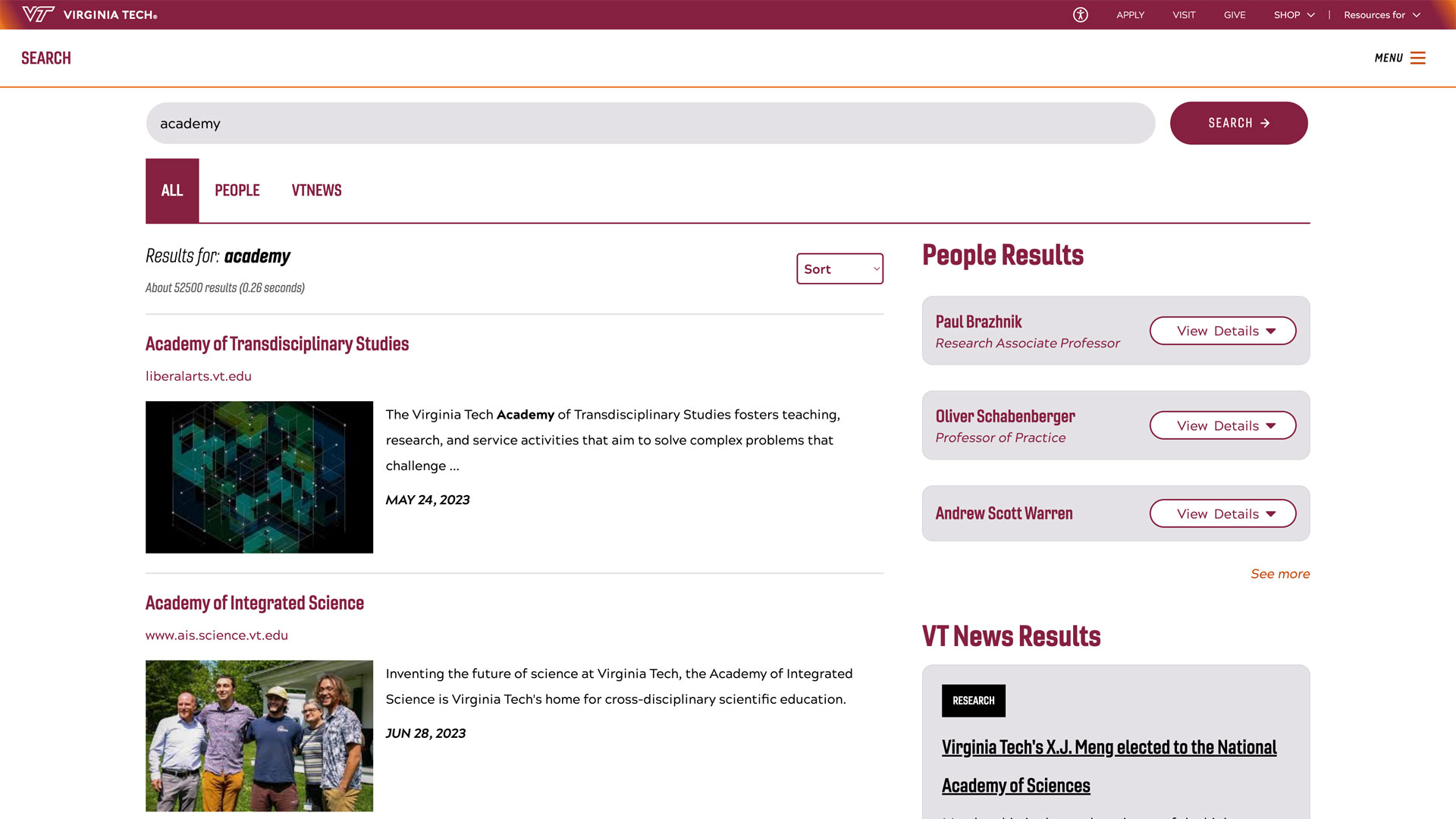

Kevin Shinpaugh gives a tour of the new compute cluster.

The Biocomplexity Institute’s long-anticipated new data center, built with an internal investment of $5 million, promises to bring the institute’s compute power to an unprecedented speed and scale.

Built to meet the current and future growth of the institute’s research computing needs, the new data center adds 2 megawatts of electrical power capacity. The first phase of the project will provide the capability to power and cool 20 high-density compute racks, each consuming up to 50 kilowatts. This is more than 10 times the capability of the institute’s previous data centers, which had run out of space and cooling capacity.
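As a rough illustration of what those figures imply, the short Python sketch below works out the peak load of the first phase. The rack count, per-rack limit, and 2-megawatt capacity come from the article; the assumption that every rack draws its full 50 kilowatts at once, along with the function and variable names, is ours and is used only for illustration.

```python
# Figures quoted in the article.
RACKS_PHASE_ONE = 20      # racks powered and cooled in the first phase
MAX_KW_PER_RACK = 50      # upper bound on power consumption per rack
ADDED_CAPACITY_MW = 2     # electrical capacity added by the data center


def phase_one_peak_load_mw(racks=RACKS_PHASE_ONE, kw_per_rack=MAX_KW_PER_RACK):
    """Peak rack load in megawatts, ASSUMING every rack draws its maximum."""
    return racks * kw_per_rack / 1000  # 1,000 kW per MW


peak_mw = phase_one_peak_load_mw()
share = peak_mw / ADDED_CAPACITY_MW
print(f"Phase-one peak rack load: {peak_mw:.1f} MW "
      f"({share:.0%} of the {ADDED_CAPACITY_MW} MW added)")
```

Under that worst-case assumption, the first phase would draw about 1 megawatt, or roughly half of the added electrical capacity.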

The new data center will allow the institute’s researchers to perform increasingly complex simulations of massively interacting systems. Aiming to be global in scale, such simulations help researchers understand how social networks affect the spread of certain beliefs or how epidemics like Ebola spread through populations.

"Creating a data center with this much computational power is challenging, not only from a hardware standpoint but also in the amount of energy required," said Kevin Shinpaugh, director of information technology and computing services at the institute.

For example, large data centers generate massive amounts of heat, which must be mitigated to keep clusters at their operational peak. Without chilled water cooling, temperatures can exceed 125 degrees Fahrenheit, which would easily damage the compute cluster. To ensure maximum functionality, large racks outfitted with the MotivAir ChilledDoor Rack Cooling System allow the institute to maximize its computing power within a small footprint.

Driven by NASA technology

Two main compute clusters have been teamed to power the institute’s large-scale simulation experiments. Shadowfax, the institute’s hybrid-core cluster, has been paired with the 606-teraflop Discovery cluster.

The Discovery cluster was formerly Scalable Compute Unit 8 (SCU8), a portion of the cluster owned by the NASA Center for Climate Simulation (NCCS) at Goddard Space Flight Center, where it was used for a variety of climate, Earth, and space science modeling. NASA donated SCU8 to the institute in 2016 via the Stevenson-Wydler Act.

“We’re excited to be able to partner with Virginia Tech and see some of our computing hardware continue its useful life in another dynamic, scientific research environment,” said Bruce Pfaff, NCCS lead high-performance computing system engineer and Virginia Tech alumnus. “It’s fitting that SCU8 from our ‘Discover’ cluster is now part of the VT Biocomplexity Institute’s ‘Discovery’ cluster and is continuing to support large-scale global modeling.”

The new data center’s chilled-door technology is unique on the Virginia Tech campus. Chilled-door water cooling is a less expensive, more targeted approach to cooling data center hot spots than forced air or other methods. Connected to Virginia Tech’s chilled water system, the data center continuously pumps water through a heat exchange system that returns the water to a 2,500-gallon underground buffer tank. The cooling system includes many fail-safes. During cool to cold weather, the system can take advantage of free cooling provided by a rooftop dry cooler. This reduces the cost and energy required to keep the data center cool during these periods and provides additional redundancy in case of an emergency.

DURIP: An investment in the future

One of the newest projects that will make use of the new data center has been made possible by a grant through the Defense University Research Instrumentation Program (DURIP). This $200,000 grant will enable the institute to acquire a UV 300 from SGI/HPE for high-performance in-memory computing. The system comprises one SGI UV 300 with 9 terabytes (TB) of shared memory and 216 Intel Xeon Processor E7 v3 cores.
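To put the in-memory figures in perspective, the sketch below estimates how much shared memory the system offers per core. The 9 TB and 216-core figures come from the article; the function name and the choice of decimal units (1 TB = 1,000 GB) are assumptions made for illustration, and the binary convention is shown alongside for comparison.

```python
# Figures quoted in the article.
TOTAL_MEMORY_TB = 9   # shared memory in the UV 300
CORES = 216           # Intel Xeon E7 v3 cores


def memory_per_core_gb(total_tb=TOTAL_MEMORY_TB, cores=CORES, gb_per_tb=1000):
    """Average shared memory per core in GB.

    gb_per_tb is an ASSUMPTION: 1000 for decimal units, 1024 for binary.
    """
    return total_tb * gb_per_tb / cores


print(f"Decimal units: {memory_per_core_gb():.1f} GB per core")
print(f"Binary units:  {memory_per_core_gb(gb_per_tb=1024):.1f} GB per core")
```

Either way, the system averages a little over 40 GB of shared memory per core, which is what makes it suited to simulations that must hold very large data sets in memory at once.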

“The movement of people, goods, and services across international borders has been steadily growing during the past couple of decades. In tandem, we are witnessing often unpredictable changes in the political, ecological, and environmental landscape across the globe,” said research scientist Sandeep Gupta. “We need a global perspective on social and epidemiological problems like the spread of disease.”

The DURIP-funded system helps the Network Dynamics and Simulation Science Laboratory develop global-scale social simulations, significantly reducing turnaround time and propelling research growth in this direction.

The extensible, scalable data center provides researchers with ready access to the high-density compute resources needed to store and interpret large data sets and make them available via web-based portals. Built with an eye toward future expansion, the new data center allows the institute’s researchers to set the pace for advanced modeling and simulations.

Tour new facilities at the institute Open House

To celebrate the new data center and new additions to the Genomic Services Center, the institute is hosting an Open House on May 22. Speakers from sponsors Dell and Illumina will join researchers to discuss the latest technologies in the fields of high-performance computing and genomic sequencing.

Registration is open now; meals and an invitation to the post-event reception are included.

Written by Tiffany Trent